With the Pandora’s box of AI cracked wide open, technologists and ethicists alike are scrambling to find ways to combat AI-generated deepfakes. The White House now says it is exploring the use of cryptographic technology to designate genuine content.

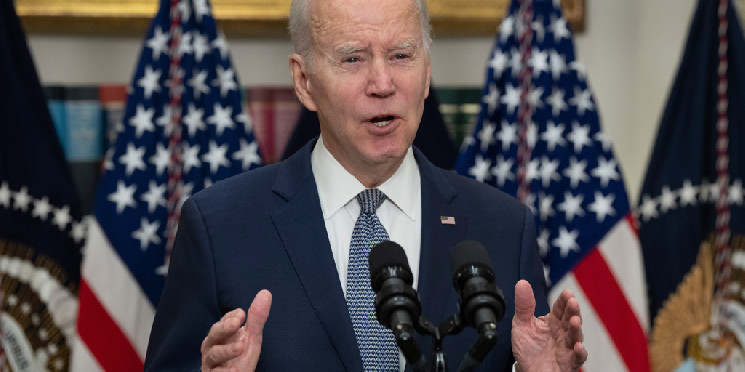

“We recognize that increasingly powerful technology makes it easier to do things like clone voices or fake videos,” White House Special Advisor on AI Ben Buchanan told Business Insider earlier this month. “We want to not inhibit the creativity that could come from having more powerful tools while also making sure we manage some of the risks.”

Buchanan said that after the Biden administration met with over a dozen AI developers in 2023, the companies agreed to embed watermarks in their products before releasing them to the public. However, watermarks can be manipulated or removed entirely, which makes the inverse approach, cryptographically marking genuine content rather than flagging fakes, attractive.

“[On] the government side, we're in the process of developing watermarking standards through the new AI Safety Institute and the Department of Commerce to make sure that we have a clear set of rules of the road and guidance here for how do we address some of these thorny watermarking and content provenance problems,” Buchanan told Yahoo Finance.

A White House spokesperson did not immediately respond to a request for comment from Decrypt.

In December, in an initiative to combat AI-generated images, digital image giants Nikon, Sony, and Canon announced a partnership to include a digital signature in images taken with their respective cameras.

Last week, the Biden administration announced the launch of the U.S. AI Safety Institute with participants including OpenAI, Microsoft, Google, Apple, and Amazon. The Institute, the administration said, was born out of Biden’s executive order directed at the AI industry in October.

Developing AI to detect AI deepfakes has become a cottage industry. However, some argue against the practice, saying it will only make the problem worse.

“Using AI to detect AI will not work. Instead, it creates a never-ending arms race. The bad guys will always win,” co-founder and CEO of identity verification company IDPartner Rod Boothby told Decrypt. “The solution is to flip the problem and enable people to prove their identity online. Using bank identity is the clear solution to the problem.”

Boothby pointed to the banking sector’s use of “continuous authentication” to ensure that the person on an anonymous internet session is who they claim to be.

For cybersecurity and legal scholar Star Kashman, protecting ourselves from deepfakes goes back to awareness.

“Especially for robocalls and AI-generated phone scams, raising awareness can prevent a lot of damage,” Kashman told Decrypt in an email. “If your family is informed of a common AI voice phone scam where scammers call pretending they kidnapped a family member, and they use AI to imitate that family member's voice, then the individual receiving the call may know to check on that relative prior to paying a fake ransom for someone who was never missing.”

Advances in generative AI have made it increasingly easy to scam and fool the general public, as evidenced most recently by a robocall campaign that attempted to keep Democratic voters in New Hampshire from participating in the primary election last month.

The robocall, which imitated President Joe Biden’s voice, was traced to a Texas-based telecom company. After issuing a cease and desist order to Lingo Telecom LLC, the U.S. Federal Communications Commission passed a new rule making robocalls using AI voices illegal.

While Kashman said awareness is still the best way to avoid being scammed by AI deepfakes, she acknowledged that the threat requires government intervention.

“As for deep fakes, knowledge does not prevent individuals from creating these of you,” Kashman said. “However, knowledge can add more pressure to the government which is needed to pass legislature making the creation of illicit non-consensual deep fakes federally illegal.”

decrypt.co