Is it Possible to Cheat the Face Recognition System: Meet the Expert

Who tries to cheat facial recognition systems most often? Usually, these are people who are in trouble with the law and do not want to be detected by security cameras, from petty thieves in supermarkets to major international criminals.

Sometimes developers of recognition technology try to "hack" the system themselves — solely for research purposes. They create workarounds and look for weaknesses in the system to improve it in the future.

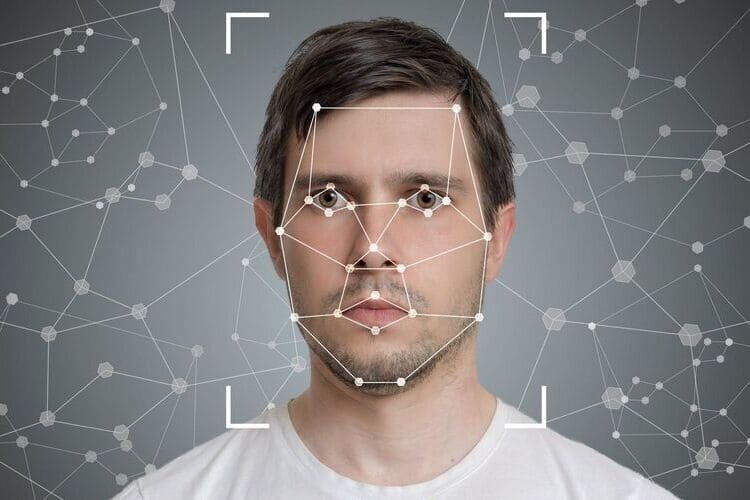

In fact, neural network algorithms are developing so quickly that they can often recognize people more effectively than the human eye. New attempts to cheat the system appear all the time, but most of them do not withstand scrutiny. Below, I go through the most common cheating techniques.

Method # 1. Hood, billed cap, brimmed hat

Headwear can deceive the program only if the camera is mounted high and its viewing angle is such that the brim or bill covers most of the face (the upper part of the face, meaning the eyes and the area around them, is considered the most informative for the neural network). But if the camera is mounted correctly and the person does not lower their head too far, the neural network will be able to recognize the face.

Method # 2. Sunglasses and prescription glasses

Ordinary prescription glasses (or lightly tinted ones) pose no problem for cameras at all. Today, only very large, dark glasses that hide most of the face can affect recognition accuracy. Even then, you are not completely hidden: most likely, the system will still recognize you, only with a slightly lower probability.
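To illustrate what "a slightly lower probability" can mean in practice, here is a minimal sketch. It assumes a common design in which faces are compared as numeric vectors (embeddings) and a match is declared when their similarity exceeds a threshold. The vectors, the `is_match` helper, and the 0.6 threshold are all made up for illustration and do not describe any particular product.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(probe, reference, threshold=0.6):
    """Return (matched, score). The threshold value is an assumption."""
    score = cosine_similarity(probe, reference)
    return score >= threshold, score

# Made-up 4-dimensional "embeddings"; real systems use far more dimensions.
reference = [0.9, 0.1, 0.4, 0.2]            # enrolled photo of the person
probe_clear = [0.88, 0.12, 0.41, 0.19]      # same person, unobstructed face
probe_glasses = [0.7, 0.3, 0.1, 0.35]       # same person, large dark glasses

for name, probe in [("clear", probe_clear), ("glasses", probe_glasses)]:
    matched, score = is_match(probe, reference)
    print(f"{name}: score={score:.3f}, matched={matched}")
```

In this toy example the occluded face scores lower than the unobstructed one but still clears the threshold, which is the situation described above: the glasses cost some confidence without hiding the person.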

Method # 3. Reflective glasses

Such glasses reflect light in the infrared range. But cameras designed to search for people for security purposes operate in different ranges. It is enough to adjust the sensitivity of certain detectors slightly, and everything becomes visible.

Method # 4. Medical masks

During the pandemic, we quickly trained algorithms to recognize faces covered with masks. Recognition accuracy dropped, but not critically. What a mask mainly affects is how well the algorithms determine your gender and age.

Method # 5. Makeup

One way to trick the system with makeup is to prevent the algorithm from understanding that it is looking at a face at all. For this purpose, activists paint exotic patterns and geometric designs on their faces. Light makeup clearly cannot do this. You can reduce recognition accuracy by whitening your face heavily to hide its relief and by applying bright eye makeup that changes the apparent shape of the eyes. This does work with ordinary cameras when the person is far away. But with higher-resolution cameras placed closer, and if the person lingers in front of the camera for at least a few seconds, this makeup will not help.

We also tested aging makeup. In this case, the neural network is indeed unable to determine a person's age accurately if the makeup is done skillfully, but it recognizes the face itself without any problems. Ordinary makeup is, of course, no obstacle to recognition at all.

Method # 6. Silicone masks

Silicone masks are the most effective way to cheat the technology. Today, it is impossible to identify the person behind such a mask. Here, the developers face a different task: determining whether the camera is looking at a real face or at a person wearing a mask. This is a separate area called anti-spoofing. A system without an anti-spoofing algorithm is easily deceived by a photo, a fake video, and similar tricks.
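The role of anti-spoofing can be sketched as a gate that runs before identification. This is only an illustration under stated assumptions: the `Frame` fields, the `decide` function, and both thresholds are hypothetical, and the liveness score stands in for the output of whatever anti-spoofing model a real system would use.

```python
from dataclasses import dataclass

@dataclass
class Frame:
    liveness_score: float  # hypothetical anti-spoofing output, 0 (fake) to 1 (live)
    best_match: str        # hypothetical closest identity found by the recognizer
    match_score: float     # hypothetical similarity to that identity, 0 to 1

def decide(frame, liveness_threshold=0.5, match_threshold=0.6):
    """Check liveness first; only a live face is passed on to identification."""
    if frame.liveness_score < liveness_threshold:
        return "rejected: possible spoof"
    if frame.match_score < match_threshold:
        return "unknown person"
    return f"identified: {frame.best_match}"

# A printed photo can match an enrolled person very well and still be rejected.
print(decide(Frame(liveness_score=0.1, best_match="alice", match_score=0.95)))
print(decide(Frame(liveness_score=0.9, best_match="alice", match_score=0.95)))
```

Without the first check, the photo in the first call would be accepted on its match score alone, which is exactly the weakness of a system that has no anti-spoofing step.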

About the author

Yuri Godina is the founder of Facemetric. In 2006, he graduated from Bauman Moscow State Technical University with a degree in ECT Design and Technology, later completing his post-graduate studies at MESI with a PhD in Economics. In 2014, he founded Facemetric, and in 2015 the company launched pilot projects with its first clients. Also in 2015, he received an MBA from MIRBIS.

Image courtesy of Pinterest