Metaverse holiday, anyone? It will be here soon. A new robotic avatar made in Italy is turning fantasy into reality.

A robot is showing us the possibilities of how intensely we can feel life in the final digital frontier.

This little chap is called the iCub robot advanced telexistence system, also called the iCub3 avatar system.

The boffins working on this are researchers at the Italian Institute of Technology in Genova, Italy. The idea is that this robot will be sent into an actual place, to feel, hear, see, experience, on a human’s behalf. All the while the human is at home, safe and comfortable. This is more than virtual reality, however. This is what comes AFTER virtual reality.

Metaverse holiday: Testing the prototype

Recently, the researchers tested the robot in a demonstration involving a human operator based in Genova. The iCub robot was sent 300 km away to visit the International Architecture Exhibition – La Biennale di Venezia. Communication relied on a basic optical fiber connection.

The human back in Genova felt everything the robot (or avatar) did: feedback was visual, auditory and haptic. This is the first time that a system with all these features has been tested using a legged humanoid robot for remote tourism.

While this system is a prototype, it shows the spectacular potential of this kind of augmented reality. Scenarios could include disaster response, such as sending the robot into an unstable building to rescue a person. Or healthcare, where a surgeon could operate remotely. Or just fun. The potential uses for this prototype in the metaverse are endless.

Metaverse holiday goals

Daniele Pucci is the Principal Investigator of the Artificial and Mechanical Intelligence (AMI) Lab at IIT in Genova. One of the lab's research goals is to develop humanoid robots that play the role of avatars. The researchers want a robotic body that acts in place of humans without replacing them. The goal is to let humans be where they usually can't be.

Pucci says that there are two areas in which the robot can be used in the metaverse. “The iCub3 avatar system uses wearable technologies and algorithms. These are used to control the physical avatar iCub3. So they can be used to control digital avatars in the Metaverse.”

There’s also a second use. “The algorithms and simulation tools that the researchers developed for controlling the physical avatar represent a testbed. We can make better digital avatars that behave more naturally and closer to reality.”

Pucci says that to control the physical avatar, human motion needs to be properly interpreted by the avatar. This transformation takes place in a digital ecosystem, a simplified metaverse. “Before transmitting data from the human to the avatar, researchers exploit a simplified metaverse to ensure that the robot motions are feasible in the real world.”
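To picture what such a feasibility check might look like, here is a minimal Python sketch. It is not the IIT researchers' software. The joint name, the limits and the `make_feasible` helper are all invented for illustration, and the only assumption is that a human pose arrives as joint angles that must be clamped to what the robot's joints and motors can do.

```python
from dataclasses import dataclass


@dataclass
class JointLimit:
    """Hypothetical limits for one robot joint (radians, radians/second)."""
    lower: float
    upper: float
    max_speed: float


def make_feasible(target, previous, limits, dt):
    """Clamp human-derived joint targets to something the robot can do.

    target   -- joint angles measured on the human, keyed by joint name
    previous -- the robot's last commanded angles
    limits   -- a JointLimit per joint name
    dt       -- seconds elapsed since the previous command
    """
    command = {}
    for name, angle in target.items():
        lim = limits[name]
        # 1) Stay inside the joint's range of motion.
        angle = min(max(angle, lim.lower), lim.upper)
        # 2) Do not move further than the motor allows in one time step.
        max_step = lim.max_speed * dt
        step = angle - previous[name]
        step = min(max(step, -max_step), max_step)
        command[name] = previous[name] + step
    return command


# Illustrative numbers only: the human bends an elbow further and faster
# than this made-up robot joint can follow.
limits = {"elbow": JointLimit(lower=0.0, upper=2.0, max_speed=1.0)}
previous = {"elbow": 0.5}
human = {"elbow": 2.6}
command = make_feasible(human, previous, limits, dt=0.1)
print(command)
```

In this toy version the robot's elbow only moves a small, safe step toward the human's pose on each update. A real system would also have to worry about balance, collisions and much more.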

So, how soon is this going to be available for home purchase?

The iCub3 Avatar System consists of two main components.

1) The operator system, namely the wearable technologies for the operator. These are either commercially available products or prototypes at advanced stages, with pre-compliance tests passed. So they may soon be available as a whole.

2) The avatar system – the iCub3 – is a prototype that needs to start a certification process, according to how it will be used.

Says Pucci, “We see the metaverse as the internet was during the nineties. Everybody thought that the internet was a breakthrough, but its applications were not really clear till 2000. So, we believe that the real applications for the metaverse will take shape in the coming years.”

Future potential

Pucci says that this research direction has tremendous potential in many fields. “On the one hand, the recent pandemic taught us that advanced telepresence systems might become necessary very quickly across different fields, like healthcare and logistics. On the other hand, avatars may allow people with severe physical disabilities to work and accomplish tasks in the real world via the robotic body. This may be an evolution of rehabilitation and prosthetics technologies.”

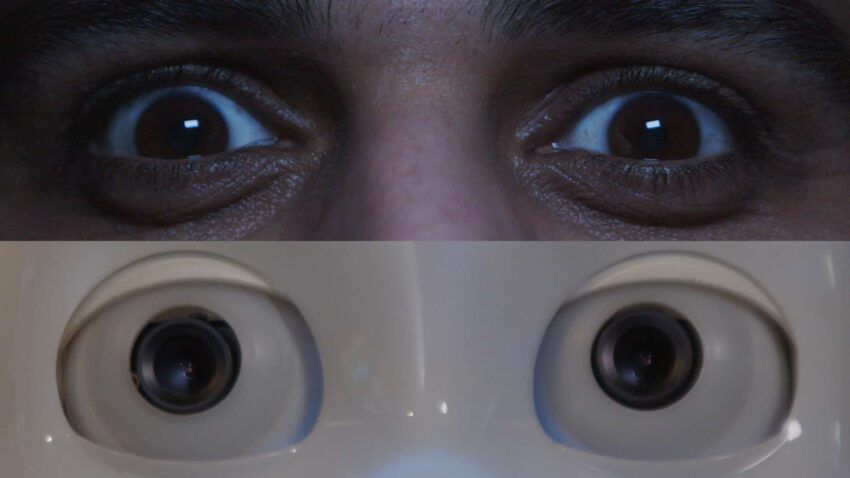

In the demonstration, the iFeel suit tracks the human’s body motions and the avatar system transfers them onto the robot in Venice. The robot then moves as the user does. The human’s headset tracks expressions, eyelids, and eye motions.

These facial features are projected onto the avatar, which reproduces them with a high level of fidelity, so the avatar and the human share very similar facial expressions. The user also wears sensorized gloves that track hand motions and, at the same time, provide haptic feedback.

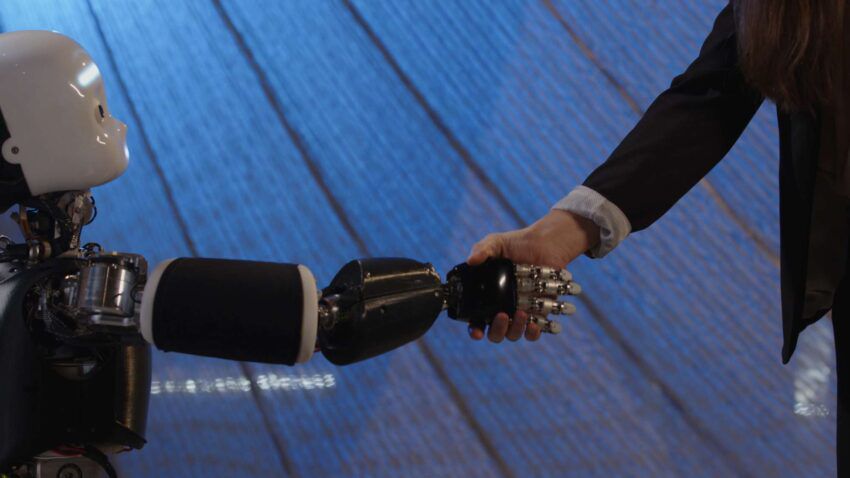

The remote user transfers normal actions to the robot: it can smile, talk, and shake the hand of the guide in Venice. When the guide hugs the avatar in Venice, the operator in Genova feels the hug thanks to the IIT’s iFeel suit.

Application to the metaverse

Says Pucci, “What I see in our near future is the application of this system to the so-called metaverse, which is actually based on immersive and remote human avatars.”

This sounds like a really, really fun future.

beincrypto.com
